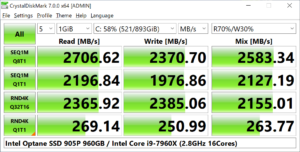

Primo Ramdisk is very reliant on memory performance, so any issue with the memory setup can have an effect (in Viktor11's case, NUMA settings may come into play). To check this, try running a memory benchmark/test and checking the BIOS settings.

Speaking of virtual memory performance, my wish is to collect useful statistics on random 4KiB IOPS (read, write and mixed read-write). The scenario that interests me is the actual performance of modern SSDs when a single-threaded application starts bombarding the drive with small packets like 128B/4KiB across a pool of 48GB or more ('Test Size' in CrystalDiskMark terminology). Since the Japanese author didn't report the IOPS, I have to divide the 4KiB Q1T1 score by 4KiB manually. This calls for a much bigger 'Test Size' than the default 1GiB, which otherwise mainly stresses the cache (which is also useful to know).

These days I intend to buy the 256GB model and put it to the test; at the moment I can only test my latest SSD, the cheapest Kingston, an A400 2.5" 240GB SATA III TLC:

D:\Sandokan_r3+_vs_Windows-sort_vs_TN-sort>dir
04:11 PM 1,138 GENERATE_Xmillion_Knight-Tours_and_SORT_them.bat
05:13 PM 1,138 GENERATE_Xmillion_Knight-Tours_and_SORT_them_64bit.bat
04:11 PM 1,631 MokujIN GREEN 224 prompt.lnk
04:11 PM 15,832 Niemann_replacement_selection+polyphase_merge_sort.c
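The manual IOPS conversion mentioned above (dividing the 4KiB Q1T1 score by 4KiB) can be sketched in a few lines. This is only an illustration: the function name `iops_from_throughput` and the 40 MB/s score are made up, and it assumes the score is in decimal MB/s (1,000,000 bytes per second), which is how CrystalDiskMark labels its results as far as I know.

```python
def iops_from_throughput(mb_per_s: float,
                         block_bytes: int = 4096,
                         bytes_per_mb: int = 1_000_000) -> float:
    """Convert a throughput score into I/O operations per second.

    Assumption: the score is in decimal MB/s (10**6 bytes per second).
    Pass bytes_per_mb=1024 * 1024 if your tool reports MiB/s instead.
    """
    return mb_per_s * bytes_per_mb / block_bytes


# Hypothetical RND4K Q1T1 read score, not a measured result.
score_mb_per_s = 40.0
print(f"{iops_from_throughput(score_mb_per_s):.0f} IOPS")
```

The same function works for the 128B case by passing `block_bytes=128`.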

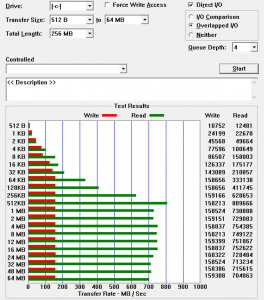


When I ran the same test with CrystalDiskMark, I found that the disk controller was multi-threaded for SEQ1M and single-threaded for the rest. Note that CrystalDiskMark is a front-end to Microsoft DiskSpd, so it is not possible to use ... If you want to test it, it would be easier for Primo to use Microsoft DiskSpd for command-line (batch) processing.

When the block size (buffer) is 1MiB, the processing is multi-threaded based on the number of queues, but when the block size is 4KiB, the processing is single-threaded regardless of the number of queues. Since a queue depth of one means one read/write instruction at a time (basically one user application), the actual experience is slow. You can see this by trying to copy files on a RAM disk. The default size of the program's file stream buffer is 4KiB (4,096B), so the block size is 4KiB, the same as in CrystalDiskMark. The smaller the block size, the slower it gets.

CrystalDiskMark 8.0.2 x64 (C) 2007-2021 hiyohiyo

Sequential access tends to be faster than random access whatever the medium, for different reasons (for hard discs, it's the time taken to move the head to a new track; for RAM, the time taken to fire up the appropriate Row/Column Address Strobes). In my experience, the difference between best-case and worst-case performance can vary by a factor of 450 with hard discs, 15-20 with SSDs and 7-8 with ramdisks/PrimoCached volumes. In both Viktor11's and SysVR's cases (welcome to the forums, both of you!) the ratio appears to be greater than expected.

There may be other software interfering with disk access (e.g. an overly-aggressive virus/file scanner), so try using software like Process Explorer or Process Hacker to monitor CPU utilisation and disk I/O during a benchmark run, to check that no other processes are taking up significant CPU or I/O.
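For anyone who wants to separate queue depth from thread count by calling DiskSpd directly, as suggested above, here is a rough sketch that only builds and prints the command lines (it does not run them). The helper name `diskspd_args`, the target path `D:\testfile.dat` and the chosen profiles are my own assumptions; the switches are the documented DiskSpd ones as I understand them (`-b` block size, `-o` outstanding I/Os per thread, `-t` threads per target, `-r` random access, `-w` write percentage, `-d` duration in seconds, `-Sh` disable software caching and hardware write caching, `-L` latency statistics).

```python
def diskspd_args(target, block, queue_depth, threads, random_access,
                 seconds=30, write_pct=0):
    """Build a DiskSpd argument list for one test profile.

    Assumption: `target` is an existing test file that is large enough
    to defeat caching; this sketch does not create it.
    """
    args = [
        "diskspd",
        f"-b{block}",        # block size, e.g. 4K or 1M
        f"-d{seconds}",      # test duration in seconds
        f"-o{queue_depth}",  # outstanding I/Os (queue depth) per thread
        f"-t{threads}",      # worker threads per target
        f"-w{write_pct}",    # write percentage (0 = read only)
        "-Sh",               # bypass software cache and hardware write cache
        "-L",                # collect latency statistics
    ]
    if random_access:
        args.append("-r")    # random I/O; sequential is the default
    args.append(target)
    return args


# Hypothetical profiles: same 4K block, varying queues versus threads.
profiles = [
    ("RND4K Q1T1", "4K", 1, 1, True),
    ("RND4K Q32T1", "4K", 32, 1, True),
    ("RND4K Q1T8", "4K", 1, 8, True),
    ("SEQ1M Q8T1", "1M", 8, 1, False),
]
for name, block, queues, threads, rnd in profiles:
    cmd = diskspd_args(r"D:\testfile.dat", block, queues, threads, rnd)
    print(f"{name}: {' '.join(cmd)}")
```

Comparing the Q32T1 and Q1T8 results against Q1T1 should show whether the extra outstanding requests or the extra threads make the difference on a given driver.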


Is there an issue with the disk controller? (It's probably a problem on the driver side, but it could also be a problem on the Windows or DiskSpd side.)